How would you like a camera that could eliminate red eye entirely and, better yet, need no flash at all? Kodak is working on one. The company is developing a sensor that reads not only the color but also the brightness of what you are shooting, and it plans to use the new technology in cameras it releases in 2008.

Kodak’s New Sensor May Eliminate Flash: Dory Devlin for Yahoo Tech

Here’s how it would work: the new technology would increase the light sensitivity of existing image sensors two to four times. That would allow faster shutter speeds, which would reduce blurring from camera shake. If it works, it would also let photographers shoot in low light without producing grainy, speckled photos.
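To put the "two to four times" figure in photographers' terms, here is a minimal sketch, assuming the extra sensitivity translates directly into shorter exposure at the same aperture and scene brightness. Each doubling of sensitivity is one photographic stop. The 1/30 s starting shutter speed and the function names are hypothetical, chosen only for illustration.

```python
import math


def stops_gained(sensitivity_gain: float) -> float:
    """Each doubling of sensitivity is worth one photographic stop."""
    return math.log2(sensitivity_gain)


def faster_shutter(base_exposure_s: float, sensitivity_gain: float) -> float:
    """Exposure time giving the same brightness, assuming (hypothetically)
    that brightness scales with sensitivity x exposure time and that the
    aperture and scene light stay fixed."""
    return base_exposure_s / sensitivity_gain


if __name__ == "__main__":
    base = 1 / 30  # hypothetical starting shutter speed, in seconds
    for gain in (2, 4):
        t = faster_shutter(base, gain)
        print(f"{gain}x sensitivity: {stops_gained(gain):.0f} stop(s), about 1/{round(1 / t)} s")
```

Under these assumptions, a 2x gain turns a 1/30 s exposure into roughly 1/60 s, and a 4x gain into roughly 1/120 s, short enough to cut down on hand-shake blur in many situations.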

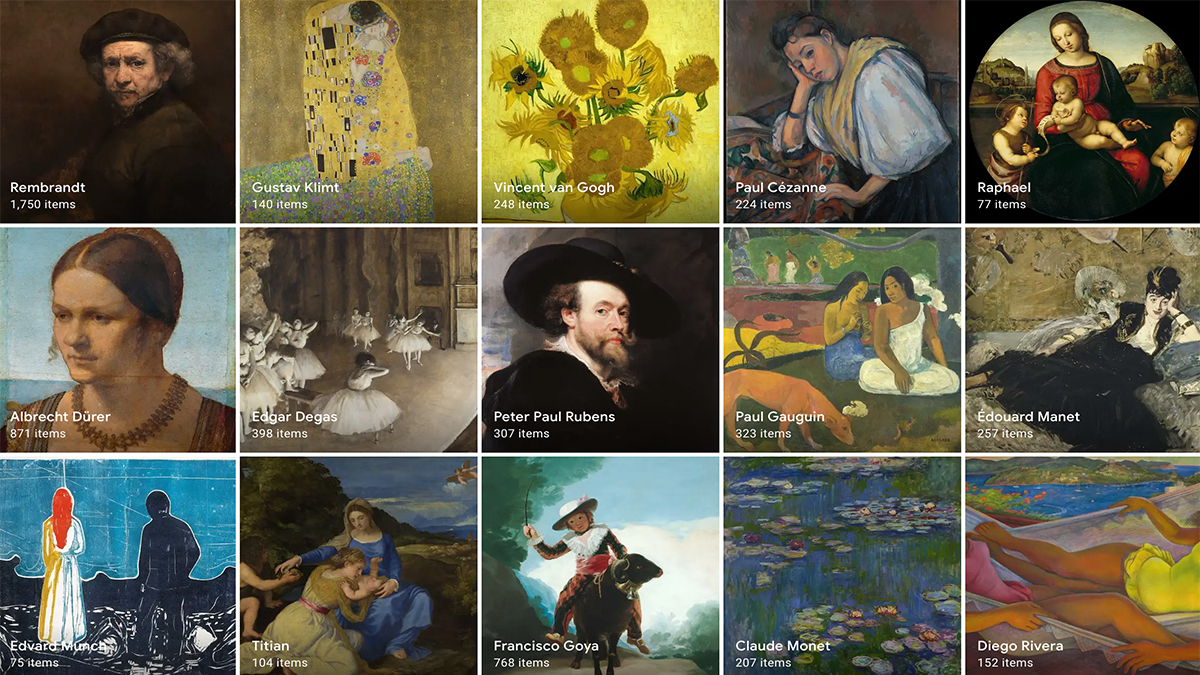

The proof is in the pixels. In most digital cameras, each sensor pixel detects red, green, or blue, and the pixels are arranged in a pattern named for Bryce Bayer, the Kodak engineer who developed it. With the new high-sensitivity technology, half of the pixels will be panchromatic, or clear, so they will capture only brightness, not color. That means a 12-megapixel camera would have 6 million panchromatic pixels, 3 million green pixels, 1.5 million red pixels, and 1.5 million blue pixels. By comparison, today’s 12-megapixel cameras have 6 million green pixels, 3 million red, and 3 million blue.
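The pixel counts above follow from simple fractions of the total: a Bayer sensor is half green, a quarter red, and a quarter blue, while the new design is half clear, with the remaining half split in the same 2:1:1 green/red/blue ratio. The sketch below reproduces that arithmetic. The dictionary and function names are hypothetical, and it models only the per-channel shares, not the actual spatial layout of Kodak's panchromatic pattern.

```python
# Per-channel pixel counts for a sensor of a given total resolution.
# Only the channel fractions from the article are modeled here;
# the physical arrangement of the pixels is not.

BAYER_FRACTIONS = {"green": 1 / 2, "red": 1 / 4, "blue": 1 / 4}
PANCHROMATIC_FRACTIONS = {"clear": 1 / 2, "green": 1 / 4, "red": 1 / 8, "blue": 1 / 8}


def channel_counts(total_pixels: int, fractions: dict[str, float]) -> dict[str, int]:
    """Split total_pixels among channels according to their fractional share."""
    return {channel: round(total_pixels * share) for channel, share in fractions.items()}


if __name__ == "__main__":
    total = 12_000_000  # a 12-megapixel sensor
    print("Bayer:       ", channel_counts(total, BAYER_FRACTIONS))
    print("Panchromatic:", channel_counts(total, PANCHROMATIC_FRACTIONS))
```

Note that the new layout has half as many pixels of each color as a Bayer sensor of the same resolution; the trade-off is that the other half of the sensor collects light without a color filter blocking part of it.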
